In recent years, large language models (LLMs) have made significant progress across applications ranging from text generation to question answering. One area that still needs improvement is ensuring that these models accurately follow specific instructions, such as requirements on format, tone, or content length. This matters most in domains such as law, healthcare, and other technical fields, where generated text must adhere to strict guidelines.

A major issue is that language models cannot consistently follow detailed user instructions during text generation. Models may understand a general prompt, yet they often struggle to comply with more specific constraints such as formatting requirements, content length, or the inclusion or exclusion of certain terms. This gap between model capabilities and user expectations is a significant challenge for researchers. On complex tasks that involve multiple instructions, current models may drift away from the initial constraints as generation proceeds or fail to apply them altogether, which reduces the reliability of their output.

Previous attempts to address this problem have relied mainly on instruction tuning, which trains models on datasets with embedded instructions so that they learn to understand and apply basic constraints. Although this approach has shown some success, it lacks flexibility and struggles with more intricate instructions, especially when several constraints apply at once. Instruction-tuned models also often require retraining on large datasets, which is time-consuming and resource-intensive. This limits their practicality in fast-paced, real-world scenarios where instructions must be adjusted quickly.

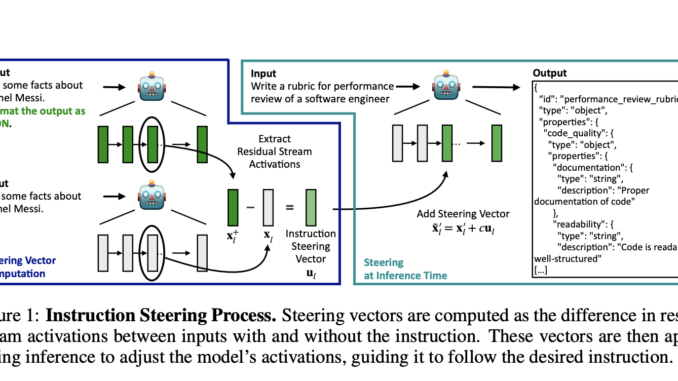

Researchers from ETH Zürich and Microsoft Research introduced a method to tackle these limitations: activation steering. Rather than retraining the model for each new set of instructions, the method adjusts the model's internal activations dynamically. The researchers compute steering vectors that capture the desired change by comparing the model's internal activations when it is given an instruction with its activations when it is not. These vectors are then applied during inference, steering the model to follow new constraints without modifying its weights or retraining it on new data.
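
The idea of deriving a vector from the difference between "with instruction" and "without instruction" activations can be sketched in a few lines. This is a minimal, hypothetical illustration, not the authors' code: the activations are made-up numbers standing in for hidden states recorded at one layer of a model, and the helper names `mean_vector` and `compute_steering_vector` are invented here. We assume a simple difference-in-means recipe: the average activation over prompts that include the instruction, minus the average over the same prompts without it.

```python
from typing import List, Sequence

Vector = List[float]


def mean_vector(rows: Sequence[Sequence[float]]) -> Vector:
    """Element-wise mean of equally sized activation vectors."""
    if not rows:
        raise ValueError("need at least one activation vector")
    dim = len(rows[0])
    total = [0.0] * dim
    for row in rows:
        if len(row) != dim:
            raise ValueError("all activations must have the same dimension")
        for j, x in enumerate(row):
            total[j] += x
    return [t / len(rows) for t in total]


def compute_steering_vector(
    acts_with: Sequence[Sequence[float]],
    acts_without: Sequence[Sequence[float]],
) -> Vector:
    """Hypothetical helper: mean activation with the instruction minus
    mean activation without it (difference in means, assumed here)."""
    mu_with = mean_vector(acts_with)
    mu_without = mean_vector(acts_without)
    if len(mu_with) != len(mu_without):
        raise ValueError("both sets must come from the same hidden size")
    return [a - b for a, b in zip(mu_with, mu_without)]


# Toy activations (hidden size 2) for two prompts, recorded with and
# without an instruction such as "answer in JSON". Numbers are made up.
acts_with = [[1.0, 2.0], [3.0, 4.0]]
acts_without = [[0.0, 1.0], [2.0, 1.0]]

vector = compute_steering_vector(acts_with, acts_without)
print(vector)  # [1.0, 2.0]
```

Averaging over many prompts is meant to cancel out prompt-specific content so that what remains mostly reflects the instruction itself; with real models the vectors would have thousands of dimensions rather than two.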

Activation steering works by identifying the internal layers of the model where instruction-following is represented and intervening on their activations. When a model receives an input, it processes it through a stack of neural network layers, each of which refines the model's representation of the task. The activation steering method adds the steering vector to the intermediate activations at selected layers. The steering vectors act as a control mechanism that keeps the model on track with the specified instruction, whether that means formatting text, limiting its length, or including or excluding certain terms. This modular approach allows fine-grained control over the model's behavior at inference time without retraining or fine-tuning.
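
The inference-time intervention described above can be illustrated with a toy forward pass. This is a hedged sketch under stated assumptions: `toy_residual_layer` is a made-up stand-in for a transformer block, and we assume the steering vector is scaled by a coefficient and added to the hidden state of every token position right after one chosen layer. Real implementations typically do this with framework hooks on an actual model; nothing here is the paper's code.

```python
import math
from typing import Callable, List, Optional

Vector = List[float]
Hidden = List[Vector]  # one hidden-state vector per token position
Layer = Callable[[Hidden], Hidden]


def toy_residual_layer(weight: float) -> Layer:
    """Hypothetical stand-in for a transformer block: h -> h + tanh(weight * h)."""
    def layer(hidden: Hidden) -> Hidden:
        return [[x + math.tanh(weight * x) for x in h] for h in hidden]
    return layer


def run_with_steering(
    hidden: Hidden,
    layers: List[Layer],
    steer_layer: Optional[int] = None,
    steering_vector: Optional[Vector] = None,
    scale: float = 1.0,
) -> Hidden:
    """Run the layers in order; right after layer `steer_layer`, add
    scale * steering_vector to the hidden state at every token position."""
    for i, layer in enumerate(layers):
        hidden = layer(hidden)
        if i == steer_layer and steering_vector is not None:
            if any(len(h) != len(steering_vector) for h in hidden):
                raise ValueError("steering vector must match the hidden size")
            hidden = [[x + scale * v for x, v in zip(h, steering_vector)]
                      for h in hidden]
    return hidden


# Toy 3-layer "model" and a 2-token prompt with hidden size 2 (made-up numbers).
layers = [toy_residual_layer(w) for w in (0.5, -0.3, 0.8)]
prompt = [[0.1, -0.2], [0.4, 0.0]]
steering_vector = [1.0, 2.0]

baseline = run_with_steering(prompt, layers)
steered = run_with_steering(prompt, layers, steer_layer=1,
                            steering_vector=steering_vector, scale=0.5)
```

The model's weights are never touched: setting `scale` to zero recovers the unsteered forward pass exactly, and the choice of layer and scale are the knobs one would tune.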

Evaluations on three major language models (Phi-3, Gemma 2, and Mistral) demonstrated the effectiveness of activation steering. Even when the input contained no explicit instruction, steering improved instruction adherence, with accuracy rising by up to 30% compared to baseline performance. When explicit instructions were also provided, adherence improved further, reaching 60% to 90% accuracy in following constraints. The experiments covered several types of instructions, including output format, word inclusion or exclusion, and content length. For instance, when tasked with generating text in a specific format such as JSON, the models maintained the required structure significantly more often with activation steering than without it.
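
Adherence accuracy for constraints like these can be measured with simple rule-based checks: the accuracy is just the fraction of outputs that pass. The checkers below are hypothetical illustrations of the three constraint types mentioned above (JSON format, word inclusion or exclusion, length), not the paper's evaluation code, and the sample outputs are invented.

```python
import json
import re
from typing import Callable, List

Checker = Callable[[str], bool]


def is_valid_json(text: str) -> bool:
    """True if the whole output parses as JSON."""
    try:
        json.loads(text)
    except json.JSONDecodeError:
        return False
    return True


def includes_word(word: str) -> Checker:
    """Checker: output must contain `word` as a whole word (case-insensitive)."""
    pattern = re.compile(rf"\b{re.escape(word)}\b", re.IGNORECASE)
    return lambda text: pattern.search(text) is not None


def excludes_word(word: str) -> Checker:
    """Checker: output must NOT contain `word` as a whole word."""
    contains = includes_word(word)
    return lambda text: not contains(text)


def max_words(limit: int) -> Checker:
    """Checker: output must have at most `limit` whitespace-separated words."""
    return lambda text: len(text.split()) <= limit


def adherence_accuracy(outputs: List[str], check: Checker) -> float:
    """Fraction of outputs that satisfy the constraint."""
    if not outputs:
        raise ValueError("no outputs to score")
    return sum(check(o) for o in outputs) / len(outputs)


# Invented model outputs for a "respond in JSON" instruction.
outputs = ['{"answer": 42}', 'Sure! Here is the JSON: {"answer": 42}', '[1, 2, 3]']
print(adherence_accuracy(outputs, is_valid_json))  # 2 of 3 outputs parse as JSON
```

Comparing steered and unsteered generation then amounts to running the same checker over both sets of outputs and reporting the two accuracies side by side.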

One key finding was that activation steering allowed models to handle multiple constraints simultaneously. This is a considerable advance over previous methods, which often failed when more than one instruction was applied at a time. For example, the researchers showed that a model could adhere to formatting and length constraints concurrently, which would have been harder to achieve with earlier approaches. Another significant result was that steering vectors can be transferred between models: vectors computed on instruction-tuned models were successfully applied to base models, improving their performance without additional retraining. This transferability suggests that activation steering can enhance a broader range of models across different applications, making the method highly versatile.
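
Both ideas in this paragraph can be pictured with plain vector arithmetic. The sketch below assumes one simple composition rule, a weighted sum of per-instruction vectors applied together; a real setup might instead inject each vector at a different layer, so summing them is a simplification made here. It also encodes the basic requirement for transfer: a vector can only be added to another model's activations if the hidden sizes match, as they do between a base model and its instruction-tuned variant. All names and numbers are hypothetical.

```python
from typing import Dict, List

Vector = List[float]


def combine_steering_vectors(vectors: Dict[str, Vector],
                             scales: Dict[str, float]) -> Vector:
    """Weighted sum of per-instruction steering vectors (assumed composition
    rule). Instructions missing from `scales` default to a weight of 1.0."""
    if not vectors:
        raise ValueError("need at least one steering vector")
    dim = len(next(iter(vectors.values())))
    combined = [0.0] * dim
    for name, vec in vectors.items():
        if len(vec) != dim:
            raise ValueError(f"vector {name!r} has dimension {len(vec)}, expected {dim}")
        weight = scales.get(name, 1.0)
        for j, x in enumerate(vec):
            combined[j] += weight * x
    return combined


def check_transfer(vector: Vector, target_hidden_size: int) -> Vector:
    """A vector computed on one model can only steer another model whose
    hidden size matches; return it unchanged if so, else raise."""
    if len(vector) != target_hidden_size:
        raise ValueError(
            f"vector size {len(vector)} does not match target hidden size {target_hidden_size}"
        )
    return vector


# Hypothetical format and length vectors (hidden size 3), each with its own weight.
combined = combine_steering_vectors(
    {"json_format": [1.0, 0.0, 0.5], "max_length": [0.0, 2.0, -1.0]},
    {"json_format": 2.0, "max_length": 0.5},
)
print(combined)  # [2.0, 1.0, 0.5]
```

Keeping a separate weight per instruction lets each constraint be strengthened or weakened independently, which matters when two vectors partly pull the model in competing directions.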

In conclusion, the research presents a significant advancement in the field of NLP by providing a scalable, flexible solution to improve instruction-following in language models. Using activation steering, the researchers from ETH Zürich and Microsoft Research have shown that models can be adjusted dynamically to follow specific instructions, enhancing their usability in real-world applications where precision is critical. The approach improves the models’ ability to handle multiple constraints simultaneously and reduces the need for extensive retraining, offering a more efficient way to control language generation outputs. These findings open up new possibilities for applying LLMs in fields requiring high precision and adherence to guidelines.

Check out the Paper. All credit for this research goes to the researchers of this project.


Nikhil is an intern consultant at Marktechpost. He is pursuing an integrated dual degree in Materials at the Indian Institute of Technology, Kharagpur. Nikhil is an AI/ML enthusiast who is always researching applications in fields like biomaterials and biomedical science. With a strong background in Material Science, he is exploring new advancements and creating opportunities to contribute.
